Ideal Automated Technological Solutions to Metrology-Related Quality Challenges

Welcome to the MOX white paper series discussing ideal automated technological solutions to metrology-related quality challenges. As the first in the series, this document will help you understand the critical factors that drive cost-effective test and measurement quality and, in turn, long-term productivity and profitability. You will also see how the appropriate metrology engineering tools facilitate that success.

Measurements May Enhance or Threaten Profitability

From field operations and the manufacturing floor to the calibration laboratory and national measurement standards, measurements inform decisions: Drill for oil here or there? Accept, rework or reject product? Adjust an instrument during calibration or not? Report an instrument in- or out-of-tolerance (OOT)?

Whenever business and quality decisions depend on measurements, as they increasingly do in our technological society, the business’s bottom line depends on measurement quality. Correct decisions increase profit, whereas incorrect decisions incur costs. High-quality test and measurement processes make correct decisions far more likely.

National and international quality standards [1]-[3] specify various metrics that quantify measurement quality. These measurement-quality metrics (MQMs) [4] include test-accuracy ratios, test-uncertainty ratios, measurement reliability, false-accept risk, and false-reject risk. Other papers dive deeper into MQM details; here we just note that all MQMs depend on tolerance specifications (decision limits) and measurement uncertainty. Specifications and uncertainties vary from one measurement (test point) to the next, so each test point carries its own MQMs.
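As a rough illustration of how an MQM follows from a tolerance and an uncertainty, the sketch below computes a test-uncertainty ratio and a false-accept risk for one hypothetical test point. It assumes a normally distributed measurement error, a coverage factor of k = 2, and no prior knowledge of the unit under test. The function names and numbers are illustrative only; they are not taken from MOX or from the cited standards, whose exact definitions should be consulted for real work.

```python
import math


def normal_cdf(z: float) -> float:
    """Cumulative distribution function of the standard normal distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def compute_tur(tol_lower: float, tol_upper: float, expanded_u: float) -> float:
    """Tolerance span divided by twice the expanded uncertainty (assumed definition)."""
    return (tol_upper - tol_lower) / (2.0 * expanded_u)


def specific_false_accept_risk(measured: float, tol_lower: float,
                               tol_upper: float, standard_u: float) -> float:
    """Probability that the true value lies outside the tolerance, given the
    measured value. Assumes normal measurement error and a non-informative prior."""
    p_upper = normal_cdf((tol_upper - measured) / standard_u)
    p_lower = normal_cdf((tol_lower - measured) / standard_u)
    conformance_probability = p_upper - p_lower
    return 1.0 - conformance_probability


# Hypothetical test point: 10 V nominal, tolerance of +/- 0.010 V.
tol_lower, tol_upper = 9.990, 10.010
standard_u = 0.0025            # standard uncertainty in volts (assumed)
expanded_u = 2.0 * standard_u  # expanded uncertainty with k = 2 (assumed)

tur = compute_tur(tol_lower, tol_upper, expanded_u)
risk = specific_false_accept_risk(10.006, tol_lower, tol_upper, standard_u)

print(f"TUR = {tur:.2f}:1")
print(f"False-accept risk at 10.006 V = {risk:.2%}")
```

With these assumed numbers the test point has a TUR of 2:1, and an in-tolerance reading of 10.006 V still carries a false-accept risk of roughly 5.5%. Changing either the tolerance or the uncertainty changes both metrics, which is why they have to be evaluated test point by test point.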

To demonstrate acceptable MQMs, test and measurement organizations therefore maintain detailed uncertainty budgets, a problematic and costly proposition. Besides the initial engineering investment, measurement systems and processes change continually, and every change can require updates to numerous uncertainty budgets. This problem grows enormously if an organization wishes to rigorously customize an uncertainty budget for every test point in its workload. In other words, managing the quality infrastructure that turns correct measurement decisions into profit incurs a significant cost of its own.

Critical Cost Factors

To understand measurement support costs, consider the management system’s principal components:

- Documents and procedures: quality program, measurement procedures, datasheets, spec sheets, etc.

- Workload management: assets, customers, vendors, work orders, traceability, invoicing, etc.

- Data acquisition systems: test points, specifications, instrument control software, etc.

- Measurement analysis: uncertainty, MQMs, guard banding, OOT determination, intervals, etc.

These key components create value for the business: tested products, calibrated instruments, measurement certificates, accreditation scopes, etc. The components and value streams all revolve around individual test points, of which an organization may have tens of thousands to manage.

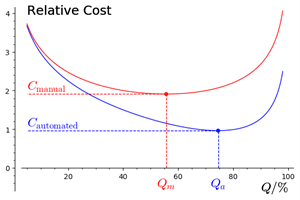

Businesses strike a balance between quality and support cost, and their available technology determines where that balance falls. While organizations may tackle any or all of these processes manually, automation vastly improves the quality-cost relation, as Figure 1 illustrates. Consequence costs (the costs of incorrect decisions) dominate on the left, low-quality side, and support costs dominate on the right, high-quality side, with an optimum operating point somewhere between them. Unless it improves its technology, a business remains confined to a single quality-cost curve.
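The shape of this trade-off can be sketched with a toy model. The functional forms below are assumptions chosen only for illustration (consequence cost falling as 1/quality, support cost rising linearly with quality); they are not the curves behind Figure 1, and the parameter values are made up.

```python
import math


def total_cost(quality: float, consequence_scale: float, support_rate: float) -> float:
    """Toy total cost: consequence cost (falls with quality) plus support cost
    (rises with quality). Both functional forms are illustrative assumptions."""
    return consequence_scale / quality + support_rate * quality


def optimum_quality(consequence_scale: float, support_rate: float) -> float:
    """Quality level that minimizes the toy total cost.
    Setting d/dq (a/q + b*q) = 0 gives q = sqrt(a / b)."""
    return math.sqrt(consequence_scale / support_rate)


consequence_scale = 100.0   # hypothetical cost scale of incorrect decisions
manual_support_rate = 4.0   # hypothetical support cost per unit of quality, manual
automated_support_rate = 1.0  # hypothetical support cost per unit of quality, automated

q_manual = optimum_quality(consequence_scale, manual_support_rate)
q_auto = optimum_quality(consequence_scale, automated_support_rate)
cost_manual = total_cost(q_manual, consequence_scale, manual_support_rate)
cost_auto = total_cost(q_auto, consequence_scale, automated_support_rate)

print(f"manual:    optimum quality {q_manual:.1f}, total cost {cost_manual:.1f}")
print(f"automated: optimum quality {q_auto:.1f}, total cost {cost_auto:.1f}")
```

In this toy model, lowering the support cost per unit of quality moves the optimum from quality 5 at a total cost of 40 to quality 10 at a total cost of 20. The business lands on a different curve rather than at a different point on the same one.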

The market offers many dedicated software solutions for each of these major components: document configuration management systems, laboratory information management systems, measurement automation environments, and measurement analysis packages. These siloed components, however, depend heavily on each other in practice, and on much of the same data. All of them, for example, use the same specification and test-point information, which forces users to copy and maintain the same data in multiple places, inviting errors and wasting resources.

Furthermore, while specialized analysis software facilitates uncertainty budgeting and reduces the calculation burden, these solutions typically represent an entire measurement area, say DC voltage or pneumatic pressure, with a single simplified budget disconnected from routine work. The measurement details vary in practice, however, so the as-measured and as-documented uncertainties generally differ. Without a cost-effective way to validate the actual work done, test point by test point, measurement quality suffers. A representative budget either conservatively inflates your uncertainties (costing you market share and requiring more expensive equipment), or your work goes to customers with worse MQMs than you reported or documented (incurring rework, audit findings and customer dissatisfaction).
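A minimal sketch of that mismatch follows. It compares one hypothetical representative (as-documented) uncertainty for a measurement area against hypothetical as-measured uncertainties at individual test points. The classification labels, the 5% comparison margin, and all values are assumptions made for illustration.

```python
def classify_budget(documented_u: float, as_measured_u: float,
                    margin: float = 0.05) -> str:
    """Compare the documented uncertainty with the as-measured uncertainty.

    'understated': actual uncertainty is worse than documented, so reported
                   MQMs are optimistic.
    'overstated':  documented uncertainty is conservatively inflated.
    'consistent':  the two agree within the relative margin (assumed 5%).
    """
    if as_measured_u > documented_u * (1.0 + margin):
        return "understated"
    if as_measured_u < documented_u * (1.0 - margin):
        return "overstated"
    return "consistent"


# One representative expanded uncertainty for a whole area (hypothetical, in volts).
documented_u = 0.005

# As-measured expanded uncertainties per test point (hypothetical, in volts).
as_measured = {"10 V": 0.0021, "100 V": 0.0050, "1000 V": 0.0080}

findings = {name: classify_budget(documented_u, u) for name, u in as_measured.items()}

for name, finding in findings.items():
    print(f"{name}: {finding}")
```

Here the single budget is conservative at 10 V, accurate at 100 V, and optimistic at 1000 V. Only a check at the test-point level reveals which case applies to a given measurement.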

Three critical cost factors therefore emerge: 1) automation, 2) integration, and 3) test-point-level quality control. Without an automated, integrated, test-point-level methodology, the quality-cost balance tilts toward a lose-lose compromise.

The MOX Philosophy

Why not strategically integrate all measurement quality management components within a single architecture? An ideal technological solution would manage all the uncertainties, link them to all applicable measurements, calculate MQMs, and validate measurement quality for every test point reported. In other words, it would encapsulate and integrate document, workload, data acquisition and analysis software so that businesses get the best of both worlds: low cost and easy maintenance together with fully automated quality validation.
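The idea of linking shared uncertainties to test points can be sketched as follows. This is not MOX's design; it is a hypothetical data model in which each test point references uncertainty contributors held in one registry, so a change made once propagates to every linked test point. The sketch assumes uncorrelated contributors with unit sensitivity coefficients, combined by root-sum-square as described in the GUM (JCGM 100:2008), a coverage factor of k = 2, and a minimum TUR of 4:1 as an example acceptance policy.

```python
import math
from dataclasses import dataclass


@dataclass
class MeasurementPoint:
    """A test point that references shared uncertainty contributors by ID."""
    name: str
    tol_lower: float
    tol_upper: float
    contributor_ids: list[str]


def combined_standard_uncertainty(point: MeasurementPoint,
                                  registry: dict[str, float]) -> float:
    """Root-sum-square of the linked contributors (assumes they are
    uncorrelated and have unit sensitivity coefficients)."""
    return math.sqrt(sum(registry[cid] ** 2 for cid in point.contributor_ids))


def validate(point: MeasurementPoint, registry: dict[str, float],
             k: float = 2.0, min_tur: float = 4.0) -> tuple[float, bool]:
    """Return the TUR and whether it meets the assumed minimum-TUR policy."""
    expanded_u = k * combined_standard_uncertainty(point, registry)
    tur = (point.tol_upper - point.tol_lower) / (2.0 * expanded_u)
    return tur, tur >= min_tur


# Single shared registry of standard uncertainties (hypothetical values, in volts).
registry = {"reference_standard": 0.0003, "resolution": 0.0004}

point = MeasurementPoint("10 V DC", 9.995, 10.005,
                         ["reference_standard", "resolution"])

tur_before, ok_before = validate(point, registry)

# The reference standard is replaced by a less accurate one: edit one entry,
# and every test point linked to it is re-evaluated from the same data.
registry["reference_standard"] = 0.0012
tur_after, ok_after = validate(point, registry)

print(f"before change: TUR = {tur_before:.2f}:1, acceptable = {ok_before}")
print(f"after change:  TUR = {tur_after:.2f}:1, acceptable = {ok_after}")
```

With these assumed values the test point starts at a TUR of 5:1 and drops to roughly 2:1 after the single registry change, so the validation fails without anyone re-entering data or recomputing a budget by hand.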

MOX brings such a win-win integrated management solution to your business. No manual calculations, no redundant entries, no copying from one system to another, no painful uncertainty propagation when changes arise, no conflicting tolerances from system to system. Instead, you get as-designed and as-measured uncertainty budgets by test point at your fingertips. Rather than trading quality against cost along the same curve, this technology moves the business to a better quality-cost curve altogether.

Conclusion

Profitable business decisions require validated, high-quality measurements at an affordable cost. Automated, integrated measurement quality management with test-point-level analytics meets that requirement. Automation reduces labor costs and improves quality. Powerful analytics down to the test-point level provide a competitive technological edge. Integration completes the picture by firmly connecting your abstract quality program to your concrete workload. It puts the basic quality tenet, say what you do and do what you say, into practice, ensuring consistency, high quality and increased productivity.

References

- [1] General requirements for the competence of testing and calibration laboratories, International Organization for Standardization (ISO) Standard ISO/IEC 17025:2017, Third edition, 2017. [Online]. Available: https://www.iso.org/standard/66912.html

- [2] Requirements for the Calibration of Measuring and Test Equipment, NCSL International (NCSLI) Standard ANSI/NCSL Z540.3-2006 (R2013), Rev. R2013, 2013. [Online]. Available: https://www.ncsli.org/iMIS/Store/Store Home.aspx

- [3] Evaluation of measurement data – The role of measurement uncertainty in conformity assessment, Joint Committee for Guides in Metrology (JCGM) Guidance Document JCGM 106:2012, First edition, 2012. [Online]. Available: https://www.bipm.org/en/publications/guides/

- [4] H. Castrup, “An examination of measurement decision risk and other measurement quality metrics,” in Proc. NCSL Int. Workshop & Symposium. San Antonio, TX: NCSL International, July 26-30, 2009. [Online]. Available: https://www.ncsli.org/iMIS/Store/Store Home.aspx

- [5] Evaluation of measurement data – Guide to the expression of uncertainty in measurement, Joint Committee for Guides in Metrology (JCGM) Guidance Document JCGM 100:2008, First edition, 2008. [Online]. Available: https://www.bipm.org/en/publications/guides/